Key Takeaways

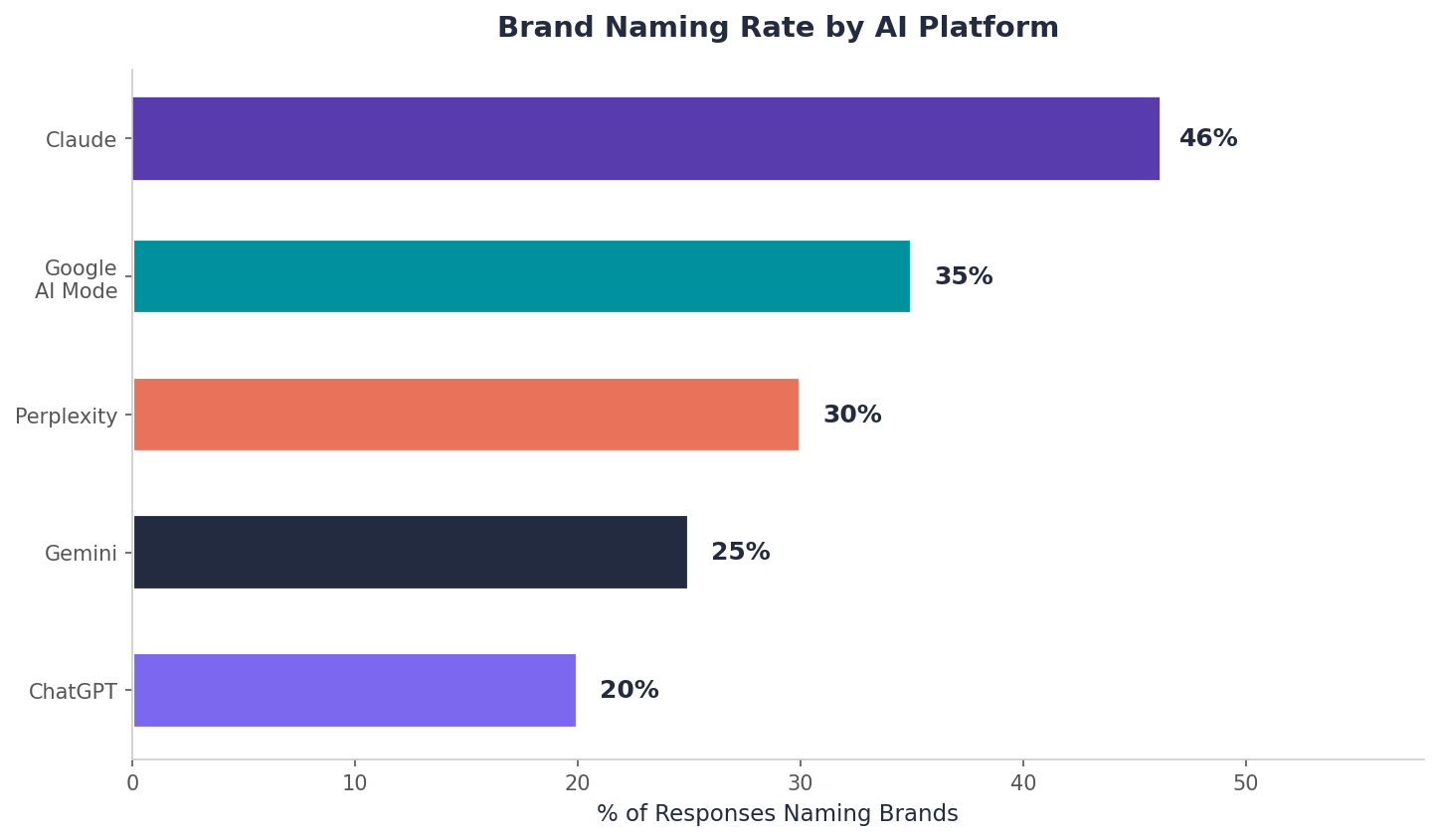

- Claude names a brand in 46% of responses, the highest rate of any AI platform, making it the most accessible front door for challenger brands.

- Claude cites owned-domain content at 9.1%, double ChatGPT's 4.5%, meaning your own site is a viable and preferred citation source on Claude.

- Utility-driven content (tool pages, error-fix guides, comparisons) dramatically outperforms editorial content in Claude citations.

- Position in Claude's narrative responses matters more than in traditional search; a positional gap from rank 3 to rank 7 can cost 18x or more in visibility.

- Claude builds topic-specific entity models, not general brand authority scores, so you will only get cited for topics where you have deep, consistent presence.

Is Your Content Invisible to Claude?

Your content team published 30 articles last quarter. Claude cited one of them. Not because the other 29 were bad. Because they were structured for an audience that reads in a way Claude does not.

That single citation happened because something about that page's structure, specificity, and placement matched how Claude's retrieval engine actually works. The other 29 articles may have been thorough, well-researched, even highly ranked on Google. None of that mattered, because Claude evaluates content through a fundamentally different lens.

Understanding that lens changes which articles get cited next quarter. This guide walks through what happens behind the curtain when Claude answers a question in your category, and which content decisions influence each step of that process.

But first, let's address why Claude deserves your attention at all.

Claude Is the Most Brand-Friendly AI Platform

If you are trying to figure out which AI platform to prioritize, start with this: Claude names a brand in 46% of its responses. That is the highest rate of any platform we track. ChatGPT, by comparison, leaves brands out of nearly two-thirds of responses.

For a challenger brand trying to break into AI visibility, this is the most accessible front door. One mid-market e-signature platform, for example, is nearly invisible on ChatGPT at 0.75% visibility but reaches 7.4% on Claude. That is a tenfold difference in exposure from the same content, simply because Claude is more willing to name names.

And the stakes keep climbing. According to Adobe, AI-referred visitors during the 2025 holiday shopping season converted at a rate 31% higher than average. These are not idle browsers. People arriving through AI recommendations are closer to a purchase decision, which makes every AI citation carry real revenue weight.

Understanding how Claude's retrieval works is the key to exploiting this advantage.

Two Sources of Knowledge: Long-Term Memory vs. Looking It Up

Claude generates answers from two distinct sources. Think of them as long-term memory and real-time research.

Pre-Training Knowledge (Long-Term Memory)

Pre-training knowledge is the massive corpus of text Claude was trained on. This encodes brand associations, product descriptions, and category relationships into the model's weights. Brands and concepts that appeared frequently and consistently across high-quality sources during training are more firmly embedded in Claude's base model.

This is like a person's general knowledge. If you ask someone "what companies make CRM software?" they will name the brands they have absorbed over years of reading, conversations, and experience. No Googling needed. Claude works the same way. Brands deeply embedded in training data surface without any retrieval step.

Real-Time Web Retrieval (Looking It Up)

Real-time web retrieval is when Claude actively fetches and reads web content to answer a query. When search is enabled (via Claude.ai Pro or the API with search tools activated), Claude runs its own search queries at response time, selects results, reads the retrieved content, and incorporates it into its answer.

This is the equivalent of someone pulling out their phone to look something up. They know the general topic, but they need a specific fact, a current price, or a detailed comparison. Claude reaches out to the web when the query demands specificity it cannot deliver from memory alone.

Why Both Sources Matter for Your Strategy

For most practical Claude SEO work, the retrieval layer is where your near-term effort goes. You can influence what Claude finds in real time by publishing and structuring the right content today.

But pre-training matters too. Claude's "mentioned without link" rate is 9.8%, higher than Perplexity's 5.6%. Those unlinked brand mentions come from pre-training knowledge, not retrieval. Your brand's presence in the training corpus is working in the background even when Claude does not cite a URL. Every time Claude mentions your brand without a link, that is pre-training doing its job.

Both sources feed the same output. Which brings us to a critical question: when your brand does appear in that output, where does it show up?

Why Position Matters More Than You Think

Most marketers focus on whether Claude mentions their brand. That is the wrong question. The right question is *where*.

Take a leading fintech platform we monitored. It averages position 2.21 in Claude's recommendations and captures over 50% visibility. A mid-size consulting firm at position 7.52 captures just 2.45%.

Let that sink in. In traditional SEO, the gap between rank three and rank seven costs you maybe 4x in click-through rate. In Claude's responses, the same positional gap costs you 18x or more in visibility.
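The multipliers quoted in this article can be checked directly from the visibility figures it cites. The sketch below is plain arithmetic; the function name is illustrative, and 50% is used as the lower bound for the fintech platform because the figure is stated as "over 50%".

```python
def visibility_ratio(higher_pct: float, lower_pct: float) -> float:
    """Return how many times larger one visibility share is than another."""
    if lower_pct <= 0:
        raise ValueError("lower_pct must be positive")
    return higher_pct / lower_pct


# Fintech platform (at least 50%) vs. consulting firm (2.45%), both on Claude.
fintech_vs_consulting = visibility_ratio(50.0, 2.45)

# The e-signature platform: 7.4% on Claude vs. 0.75% on ChatGPT.
claude_vs_chatgpt = visibility_ratio(7.4, 0.75)

print(f"{fintech_vs_consulting:.1f}x")  # prints 20.4x
print(f"{claude_vs_chatgpt:.1f}x")      # prints 9.9x
```

Both results are consistent with the "18x or more" and "tenfold" descriptions. Note that the two brands in the first comparison sit in different categories, so the ratio illustrates the scale of the gap rather than a controlled measurement.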

Why the Gap Is So Extreme

This happens because Claude's responses are not lists. They are narratives.

The brands mentioned first get more context, more explanation, and more favorable framing. By the time a response reaches brand number six or seven, the reader has already formed their shortlist. The first-mentioned brand gets a paragraph of context. The seventh gets a brief aside that most readers will skip.

Position in Claude is not a vanity metric. It is the difference between being the recommendation and being an afterthought.
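If you save the text of Claude's responses to your target queries, mention order is easy to track. The following is a minimal sketch that ranks brands by where they first appear in a response; the brand names and sample response are invented for illustration, and plain substring matching is a simplification that will miss abbreviations or misspellings.

```python
def mention_order(response_text: str, brands: list[str]) -> list[str]:
    """Return the brands found in the response, ordered by first mention."""
    text = response_text.lower()
    positions = [(text.find(brand.lower()), brand) for brand in brands]
    # str.find returns -1 when a brand is absent, so drop those entries.
    return [brand for index, brand in sorted(positions) if index >= 0]


# Hypothetical response and brand names, for illustration only.
sample_response = (
    "For most teams, Acme Routes is the strongest pick. "
    "RouteCo is a solid alternative, and FleetPath suits smaller fleets."
)
order = mention_order(
    sample_response, ["FleetPath", "Acme Routes", "RouteCo", "Missing Brand"]
)
print(order)  # prints ['Acme Routes', 'RouteCo', 'FleetPath']
```

Averaging a brand's index in this list across many saved responses gives a rough equivalent of the average position figures discussed above.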

So how do you earn a higher position? It starts with understanding what Claude actually does when a user asks a question. Let us follow a single query through the entire retrieval process.

How Claude's Retrieval Works: 5 Steps, One Query

Here is what happens behind the scenes when a buyer types: "What is the best route optimization software?"

The Takeaway from All Five Steps

Each step is a filter. Your content needs to survive all five to earn a citation. A great page that uses inconsistent brand naming might fail at Step 1. A well-branded page with thin content might fail at Step 3. A detailed page that buries its answer in paragraph twelve might fail at Step 5, because Claude found a competitor's page that answered directly in the opening lines.

What Content Types Work in Claude

So what actually makes it through all five steps? Not what you expect.

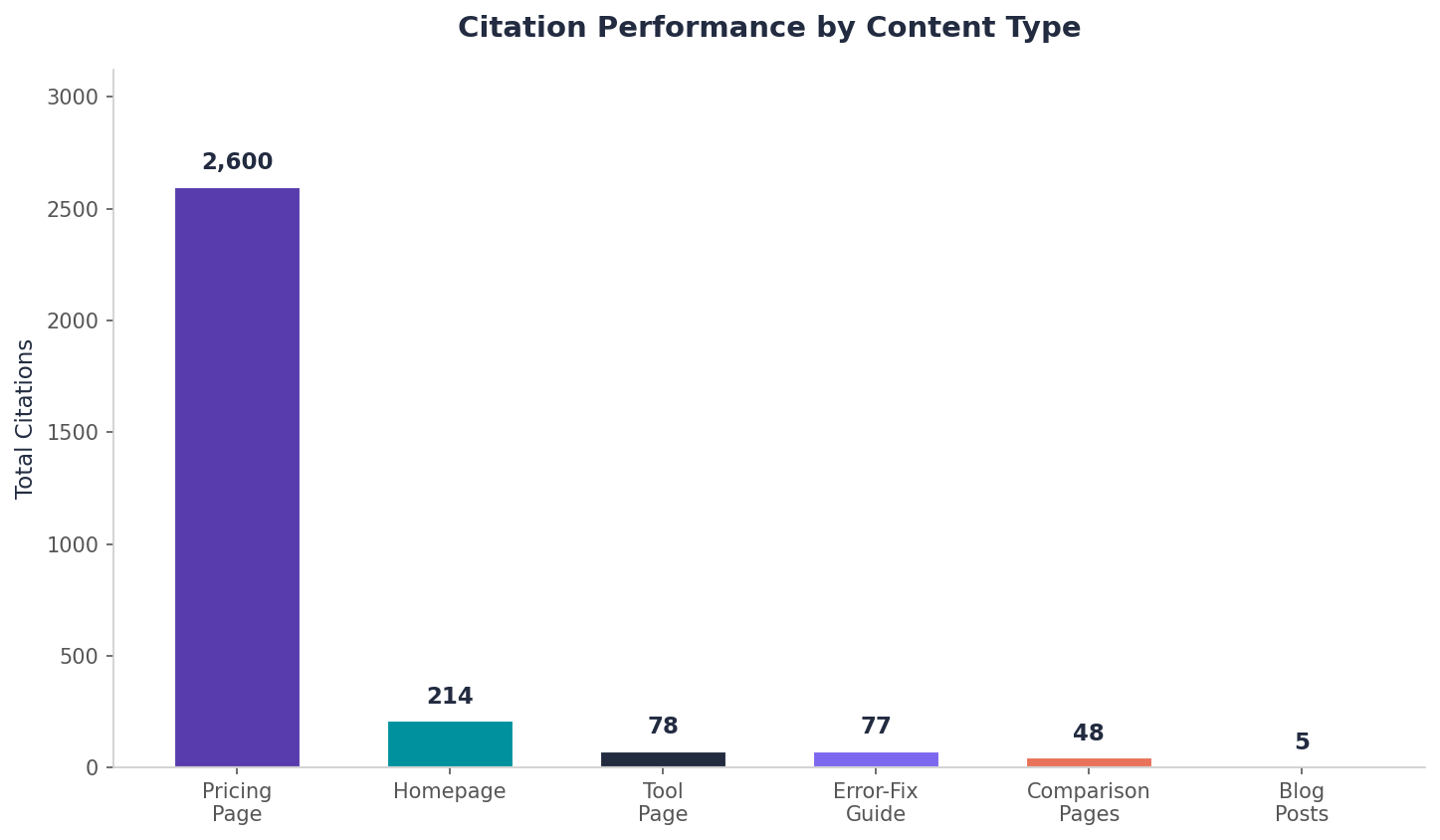

Most marketers assume their flagship blog posts or thought leadership articles earn the most citations. The data tells a different story. Utility-driven content outperforms editorial content by a wide margin.

Content Type Citation Performance

The Owned-Domain Advantage

Claude's 9.1% owned-domain citation rate, double ChatGPT's 4.5%, means investing in comprehensive, expert-level content on your own domain has a disproportionate payoff on Claude compared to other platforms.

But not all owned content performs equally. The hierarchy is clear:

- Utility-driven content (tools, troubleshooting, comparisons) earns citations at rates that compete with or exceed high-traffic landing pages

- Free tool pages can earn nearly as many citations as a homepage

- Error-fix guides can outperform product landing pages

The implication for your content strategy: do not just publish thought leadership. Build things people use. Then make sure those pages are structured so Claude can find, evaluate, and cite them.

What Claude Looks for in Citable Content

Beyond content type, specific characteristics determine whether Claude cites a page or skips it.

Direct answer placement

Claude reads the opening section first and decides early whether the content answers the query. If your article leads with background context, Claude often moves on to a page that answers directly, even if your content is more thorough overall. The answer needs to appear in the first 100 to 200 words. This is the single highest-leverage structural change most pages need.
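A rough way to audit your own pages for answer-first structure is to check whether the key terms of the target query appear within the opening words. The sketch below is a crude heuristic under that assumption, not a model of how Claude evaluates a page; the function name, the sample text, and the 200-word default are illustrative.

```python
def answer_in_opening(page_text: str, key_terms: list[str], word_limit: int = 200) -> bool:
    """Return True if every key term appears within the first `word_limit` words."""
    opening = " ".join(page_text.split()[:word_limit]).lower()
    return all(term.lower() in opening for term in key_terms)


# Illustrative page text: one version answers immediately, one buries the answer.
direct = "The best route optimization software for small fleets balances cost and accuracy."
buried = "background " * 300 + direct

terms = ["route optimization", "small fleets"]
print(answer_in_opening(direct, terms))  # prints True
print(answer_in_opening(buried, terms))  # prints False
```

A page that fails this check is a candidate for moving its direct answer closer to the top.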

Named authorship with verifiable credentials

Content attributed to a specific person with a linked author bio, LinkedIn profile, and ideally external bylines earns more citation weight than anonymous or team-attributed content. This parallels Google's E-E-A-T framework, but Claude applies it at the retrieval layer.

Structured data and clear formatting

Schema markup (Article, FAQ, HowTo, Person) gives Claude machine-readable context about your content. Pages with accurate schema are cited with better attribution than equivalent pages without it. FAQ schema appears to be extracted directly for short definitional queries. One important nuance: JSON-LD is not directly parsed by AI models during page fetch. According to tests by SearchVIU, schema works through index enrichment rather than direct extraction. The practical implication is that schema still matters, but it influences Claude indirectly through how search indexes represent your page, not by being read at retrieval time.
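For reference, FAQ markup uses the schema.org `FAQPage` type, with each question expressed as a `Question` entity carrying an `acceptedAnswer`. The sketch below builds that structure from question-and-answer pairs; the helper name is illustrative, and the sample pair is taken from this article's own FAQ.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    (
        "Does Claude use live web retrieval for every query?",
        "No. Claude uses retrieval selectively, typically for queries "
        "requiring current information or specific facts.",
    ),
])
print(markup)
```

The resulting string is placed inside a `<script type="application/ld+json">` tag on the page that displays the same questions and answers.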

Specific claims with supporting evidence

An article that says "in our analysis of 40 SaaS marketing stacks, 73% of attribution errors traced to UTM parameter stripping in Salesforce sync" gives Claude a citable data point. An article that says "marketing attribution is complex and depends on your stack" gives Claude nothing to work with. Specificity is citability.

Consistent brand framing

Your brand's positioning should read the same across every surface Claude might pull from. Inconsistent descriptions across your site, G2, Capterra, and press coverage create entity confusion. When Claude sees conflicting descriptions, it either picks the most common framing (which may not be yours) or hedges with vague language that helps nobody.

What Claude Does Not Prioritize

Knowing what does not work saves you time and budget.

- Keyword density. Claude reads for relevance and meaning, not keyword frequency. Topical depth and entity clarity matter far more.

- Paid placement. There is no ad-buy equivalent in Claude's retrieval layer.

- Recency alone. A two-year-old comprehensive guide with strong third-party validation outperforms a shallow post published this morning. Claude's unlinked brand mentions rely on pre-training knowledge, not publication dates.

- Social engagement metrics. Likes and shares do not move the needle.

- Domain age for its own sake. Age without depth is irrelevant.

Sentiment: Getting Cited Is Step One. How You Are Framed Is Step Two.

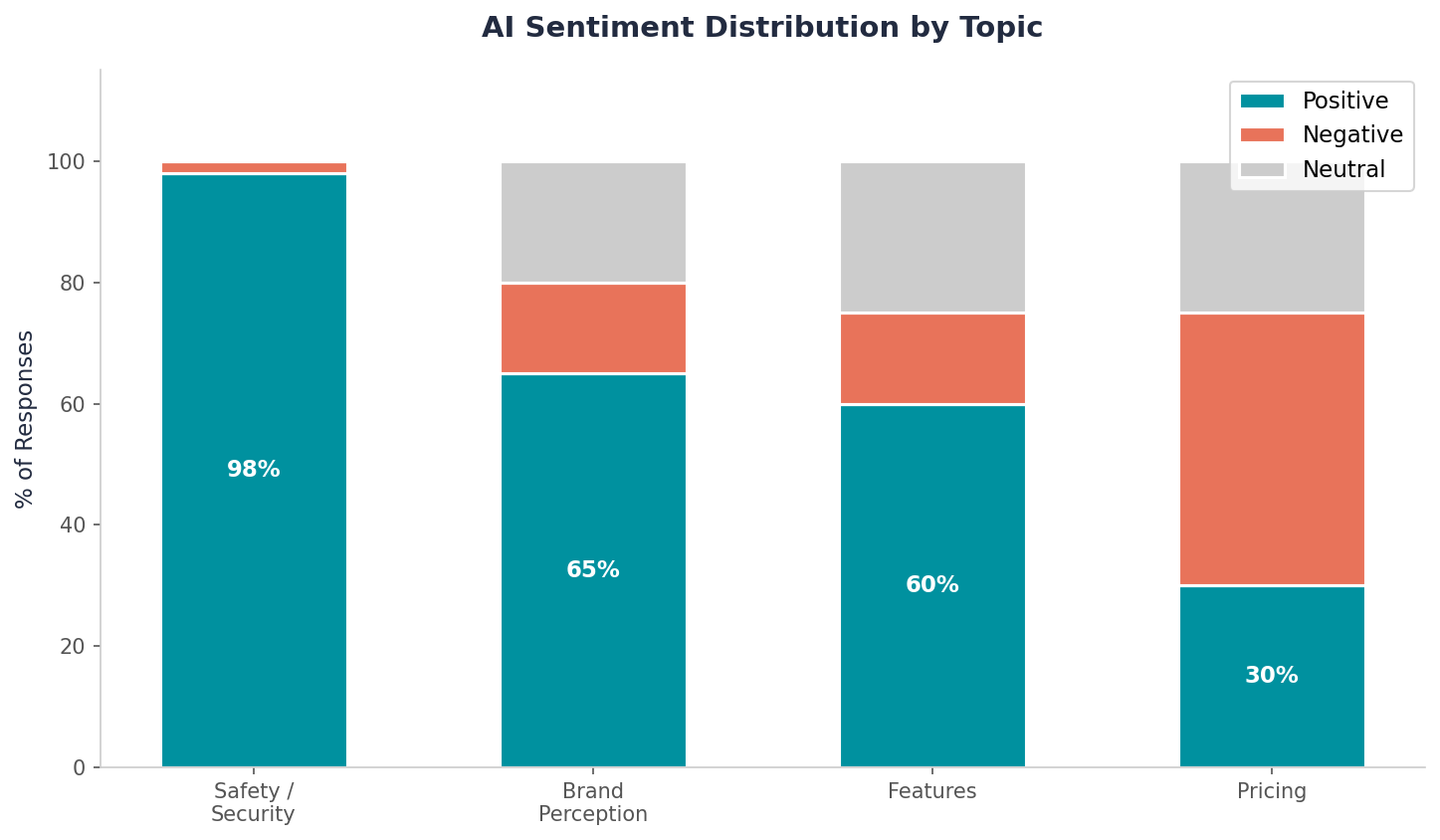

Claude does not just cite. It evaluates. Claude's citations carry sentiment, and that sentiment varies by topic. The average sentiment across brands is 65% positive. But this varies wildly by subject area.

Where Sentiment Runs Positive

Safety and security topics receive near-100% positive sentiment. When Claude cites a brand for email security, compliance, or data protection capabilities, the framing is almost universally favorable. This makes sense: security is a domain where authoritative, specific content naturally earns trust. If your brand publishes detailed, authoritative content in a trust-critical category, you will almost certainly receive positive framing.

Where Sentiment Turns Negative

Pricing transparency generates dominantly negative sentiment. When Claude discusses pricing and finds vague or unclear information, the framing turns critical. That leading fintech platform's pricing page earns thousands of citations partly because it provides the concrete pricing data Claude needs to give a neutral or positive answer. Companies that hide their pricing behind "contact us" forms do not just lose citations. They risk earning citations that frame them negatively.

The Sentiment Lesson

If you want positive sentiment in Claude's responses, publish specific, transparent content. Vagueness does not just reduce citations. It increases the chance that any citation you do receive carries negative framing. Every page on your site is a potential sentiment signal. Treat it accordingly.

What Transparent Content Looks Like vs. Vague Content

Claude evaluates sentiment when citing brands. Transparent, specific content earns positive framing. Vague content earns neutral framing at best.

Transparent content (earns positive AI sentiment):

- Published pricing with specific tiers and feature breakdowns

- Named customer results with timeframes: "reduced processing time by 40% within 90 days"

- Documented limitations alongside strengths: "works best for teams of 50 to 500"

- Self-service troubleshooting guides with specific error codes and fixes

Vague content (earns neutral or negative AI sentiment):

- "Contact sales for pricing" with no published tiers

- "Trusted by thousands of companies" with no named results

- Generic product descriptions without specific capabilities or metrics

- Marketing-heavy language with no verifiable claims

Topic-Specific Entity Models: The Culmination of Everything

Everything above converges here. Claude does not build general brand authority scores. It builds topic-specific entity models. This distinction changes your entire content strategy.

Even strong brands have blind spots. A security brand that achieves 17%+ overall visibility on Claude drops to a mention rate of just 1% for compliance-related queries. Claude simply does not associate them with compliance, despite their overall brand strength.

What This Means in Practice

Think about a restaurant technology platform that dominates conversations about POS systems but is invisible when users ask about kitchen display workflows. Or a logistics software company that earns citations for fleet tracking but gets zero mentions when the query shifts to last-mile delivery optimization. The brand is strong. The topical coverage is not.

Claude builds its understanding of your brand from the specific content you have published, the entities your pages connect to, and how consistently third-party sources reinforce those connections. You will get cited for the topics where you have built deep, consistent presence. You will be absent from topics where you have not, regardless of your overall brand strength.

How to Act on This

- Audit which topics Claude associates with your brand. Run queries across your category and adjacent categories. Note where you appear and where you do not.

- Decide which adjacent topics are worth investing in. Not every gap needs filling. Prioritize the topics that align with your product roadmap and buyer journey.

- Build deep, specific content for those topics. A single blog post will not shift Claude's entity model. You need a cluster of authoritative pages, consistent third-party mentions, and structured data that reinforces the association.
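The audit in the first step can be tallied with a few lines of code once you have saved Claude's responses per topic. This is a minimal sketch that assumes you collect the responses yourself; the brand name, topics, and response texts are made up for illustration, and substring matching is a simplification.

```python
def mention_rate_by_topic(responses_by_topic: dict[str, list[str]], brand: str) -> dict[str, float]:
    """Return the share of saved responses per topic that mention the brand."""
    rates = {}
    for topic, responses in responses_by_topic.items():
        if not responses:
            rates[topic] = 0.0
            continue
        hits = sum(brand.lower() in response.lower() for response in responses)
        rates[topic] = hits / len(responses)
    return rates


# Hypothetical saved responses for a hypothetical brand, "ExampleSec".
saved = {
    "email security": [
        "ExampleSec is a leading option.",
        "Consider ExampleSec or a similar vendor.",
    ],
    "compliance": [
        "Vendor A and Vendor B are common picks.",
        "ExampleSec also offers compliance reports.",
        "Vendor C is widely used.",
        "Vendor D focuses on audits.",
    ],
}
rates = mention_rate_by_topic(saved, "ExampleSec")
print(rates)  # prints {'email security': 1.0, 'compliance': 0.25}
```

Topics with a low rate relative to your core category are the gaps to weigh in the second step.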

This is where every lever from the previous sections compounds. Direct answer placement helps Claude find your content (Steps 1-3). Utility-driven formats earn citations (Step 5). Transparent, specific claims generate positive sentiment. And consistent brand framing across surfaces strengthens the entity model that determines whether Claude considers you an authority on a given topic at all.

Putting It All Together: A Priority-Based Playbook

Here is the practical playbook, organized by impact.

Priority 1: Restructure your top pages for answer-first formatting.

Move the direct answer to the first 150 words of every high-value page. This is the single highest-impact structural change you can make. It affects whether Claude even considers your content during retrieval.

Priority 2: Build or improve utility content.

Tool pages, error-fix guides, and comparison pages are Claude's preferred citation targets. If you do not have these content types, create them. If you do, make sure they are structured with clear answers up front.

Priority 3: Ensure named authorship everywhere.

Add real author bios with linked credentials to all content. Build bio pages for your subject matter experts. This feeds Claude's trust evaluation during retrieval.

Priority 4: Implement structured data.

Article, FAQ, HowTo, and Person schema give Claude machine-readable context it uses to evaluate and attribute your content. Remember that schema works through index enrichment, not direct parsing at fetch time, so it is still worth implementing even though Claude does not read JSON-LD directly.

Priority 5: Align brand descriptions across all surfaces.

Your website, G2, Capterra, Crunchbase, LinkedIn, and press coverage should all describe your brand and product in the same terms. Inconsistency weakens the entity signal Claude uses to identify and cite you.

Priority 6: Publish transparent, specific data.

Pricing, benchmarks, performance metrics, case study results. The more specific and transparent your content, the more likely Claude is to cite it, and the more likely that citation carries positive sentiment.

What This Means for Your Strategy Going Forward

Claude's retrieval layer is the most actionable part of this system for marketers. Content decisions you make today show up in citations within weeks, not model update cycles.

The pre-training layer is slower to influence, but not static. Every piece of content you publish, every mention you earn, every structured signal you lay down is feeding the next training cycle. This is long-game work, and it compounds.

Claude's retrieval process rewards what good marketing has always valued: substance, specificity, and consistency. The difference is that Claude makes these qualities directly measurable through citation rates, mention positions, and sentiment.

Now you know what happens behind the curtain. You know which content decisions influence each step. The question is no longer "how do I optimize for Claude?" It is "which of my pages need restructuring first?"

Where AI Citations Actually Come From: Our Cross-Category Data

We analyzed citation domains across five B2B categories in our Slate monitoring. Third-party platforms dominate everywhere.

In every category, the top competitor's domain generates more citations than the brand's own domain.

Ensuring Brand Consistency Across Platforms

Claude does not just read your website. It reads G2, Capterra, LinkedIn, Crunchbase, and every other platform where your brand appears. If your website says "AI-powered revenue intelligence platform" but your G2 profile says "sales analytics tool," Claude gets conflicting signals.

- Audit your brand description on G2, Capterra, LinkedIn, Crunchbase, and TrustRadius. Make sure the one-sentence descriptor matches your website verbatim.

- Update your CEO and leadership team LinkedIn bios to use the same category language as your homepage.

- Check partner and integration directory listings. These often use outdated descriptions.

- Create a brand vocabulary document and distribute it to every contributor and platform manager.
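The descriptor audit above can be partly automated once the one-sentence descriptions are gathered in one place. The sketch below assumes you copy each description by hand; it flags any listing that differs from the website descriptor after ignoring case, extra whitespace, and a trailing period. The function name is illustrative, and the example strings reuse the descriptors mentioned earlier in this section.

```python
def mismatched_descriptors(canonical: str, listings: dict[str, str]) -> list[str]:
    """Return the platforms whose descriptor differs from the canonical one."""

    def normalize(text: str) -> str:
        # Ignore case, repeated whitespace, and a trailing period.
        return " ".join(text.lower().split()).rstrip(".")

    target = normalize(canonical)
    return sorted(
        platform
        for platform, description in listings.items()
        if normalize(description) != target
    )


website = "AI-powered revenue intelligence platform"
listings = {
    "G2": "Sales analytics tool",
    "LinkedIn": "AI-powered revenue  intelligence platform.",
    "Capterra": "AI-Powered Revenue Intelligence Platform",
}
print(mismatched_descriptors(website, listings))  # prints ['G2']
```

If you want a strictly verbatim match, as the first bullet recommends, compare the raw strings instead of the normalized ones.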

Work with TripleDart to Build Your Claude Visibility Strategy

Building content that earns Claude citations requires a systematic approach across content structure, entity consistency, schema implementation, and ongoing citation monitoring. TripleDart helps B2B SaaS companies build and execute this process end to end, from auditing your current Claude visibility to restructuring your content for retrieval and tracking results over time.

Book a meeting with TripleDart to start building your Claude citation strategy.

Frequently Asked Questions

Does Claude use live web retrieval for every query?

No. Claude uses retrieval selectively, typically for queries requiring current information or specific facts. Many responses draw entirely from pre-training knowledge.

What content formats does Claude retrieve most?

Structured, answer-oriented content: tool comparisons, step-by-step guides, error-fix documentation, and landing pages with specific claims.

How does Claude decide which source to cite?

It weighs topical specificity, source credibility, and how directly the content answers the query. Pages that answer in their opening lines have a structural advantage.

Can I tell if Claude is retrieving from my site?

Not directly. The practical proxy is citation monitoring: run target queries regularly and note when your domain appears.
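As a minimal sketch of that proxy, assuming you record the URLs cited in each response yourself, the check below reports whether any citation points at your domain or one of its subdomains. The function name and URLs are placeholders.

```python
from urllib.parse import urlparse


def cites_domain(cited_urls: list[str], domain: str) -> bool:
    """Return True if any cited URL is on the domain or one of its subdomains."""
    domain = domain.lower().removeprefix("www.")
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


# Placeholder citations recorded from one response.
citations = ["https://www.example.com/pricing", "https://reviews.example.org/tools"]
print(cites_domain(citations, "example.com"))     # prints True
print(cites_domain(citations, "notexample.com"))  # prints False
```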

Does content recency matter to Claude?

For retrieval-enabled queries, yes. For pre-training responses, recency is irrelevant until the next model update.

Does negative sentiment affect how Claude describes my brand?

Yes. Claude synthesizes tone from sources it reads. Critical coverage can shape how the model frames your brand. Publishing specific, transparent content is the most reliable way to earn positive framing.

Does JSON-LD schema get read directly by Claude during retrieval?

No. According to SearchVIU testing, JSON-LD is not directly parsed by AI models during page fetch. Schema markup works through index enrichment, meaning it improves how search indexes represent your page, which then influences what Claude retrieves. It is still worth implementing.
